I don’t know how many of my readers remember what “Y2K” was all about. The scare peaked 24 years ago, in 1999. The story was that the world would end on January 1, 2000, because computers that stored years as two digits would get scrambled and read 2000 as 1900. All kinds of news sources promoted that imminent catastrophe.

I worked in an IT (Information Technology) organization and faced Y2K myself. What actually fixed this much-feared problem was a couple of lines of code in each of the programs (now called apps) that dealt with dates. I think I solved it for the apps I authored in less than a day. And of course, when that dreaded day actually arrived, it was little more than a blip on the radar screen.
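
A fix of that kind might have looked something like the sketch below. It is written in Python purely for illustration, and the function name `expand_two_digit_year` and the pivot value of 50 are assumptions, not taken from any real program of the era. The idea, often called "windowing", is to decide which century a two-digit year belongs to.

```python
def expand_two_digit_year(yy: int, pivot: int = 50) -> int:
    """Expand a two-digit year into four digits using a fixed window.

    The pivot of 50 is a hypothetical choice: 00-49 become 2000-2049,
    and 50-99 become 1950-1999.
    """
    if not 0 <= yy <= 99:
        raise ValueError("expected a two-digit year between 0 and 99")
    if yy < pivot:
        return 2000 + yy
    return 1900 + yy


print(expand_two_digit_year(0))   # the year stored as "00"
print(expand_two_digit_year(99))  # the year stored as "99"
```

With this window, a stored "00" is read as 2000 rather than 1900, which is the whole repair in a couple of lines.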

The SciFi world, of which I am an avid member, has been speculating about a takeover of the world by computers for more than 50 years now. One of the earliest well-known movies on this topic was 1968’s “2001: A Space Odyssey”. It went something like this.

After uncovering a mysterious artifact buried beneath the Lunar surface, a spacecraft is sent to Jupiter to find its origins – a spacecraft manned by two men and the supercomputer HAL 9000. Somewhere along the way, HAL decides to take over.

Another movie, made in 1970 and one of my favorites, was called “Colossus: The Forbin Project”.

The latest IT scare is “The Singularity”, which is described as a hypothetical future point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization. An article in the June 11 New York Times describes this latest scare as well as I ever could.

The frenzy over artificial intelligence may be ushering in the long-awaited moment when technology goes wild. Or maybe it’s the hype that is out of control.

Part of the problem of separating hype from reality is that the engines driving this technology are becoming hidden. OpenAI, which began as a nonprofit using open-source code, is now a for-profit venture that critics say is effectively a black box. Google and Microsoft also offer limited visibility.

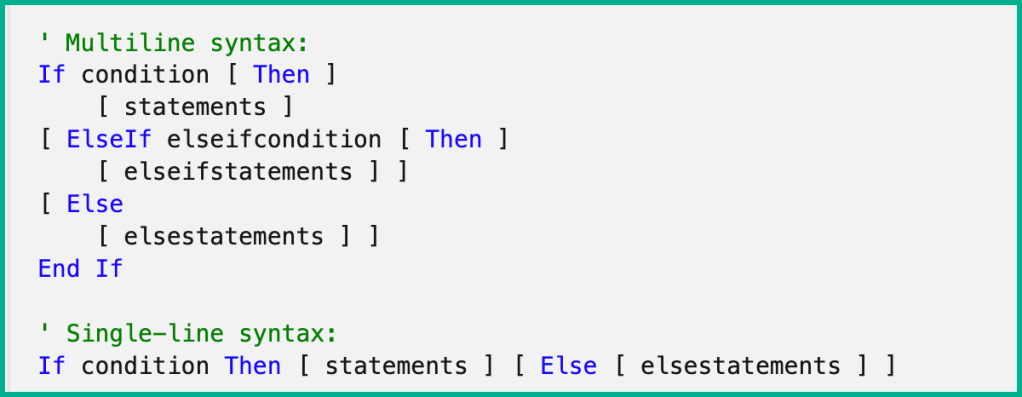

As a retired IT guy, I know that computers are controlled by code written by someone sitting at a desk somewhere. Even the smartest “super computers” today have the average self-thinking intelligence of a fourth-grader. Maybe in the distant future, there will be enough generated code for computers to appear to think for themselves. But, in reality, what that “self-thinking” computer does will actually be decided by a massive string of If-Else statements in the computer’s source code.

Here is an example:

The if/else statement executes a block of code if a specified condition is true. If the condition is false, another block of code would be executed.

If “this” then “do that” else “do something else”

When the string of “ElseIf”s becomes long enough, it may appear that the computer is thinking for itself. But in reality, all it is doing is crunching ones and zeroes as someone told it to.
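
To make that concrete, here is a minimal sketch in Python of a program that seems to “decide” something but is only walking a fixed chain of conditions. The function name `respond` and the temperature thresholds are invented for illustration.

```python
def respond(temperature_f: float) -> str:
    """Pick a canned response by walking a fixed chain of conditions."""
    if temperature_f < 32:
        return "It is freezing."
    elif temperature_f < 60:
        return "It is chilly."
    elif temperature_f < 85:
        return "It is pleasant."
    else:
        # Anything not caught above falls through to the last branch.
        return "It is hot."


print(respond(20))
print(respond(72))
```

Every answer this function can give was typed in ahead of time by a person. Adding more `elif` branches makes it look smarter, but it never does anything the chain does not spell out.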

We have been speculating about “The Singularity” for all of my lifetime, and we will continue to speculate long after I am gone. But let’s not confuse the hype with reality when it comes to today.

Yes, I remember the whole Y2K panic, which turned out to be a non-event. I did turn off whatever computer I owned at the time on New Year’s Eve, then waited for news the next morning on the death of civilization.

Humans are prone to assume the worst outcome, even when logic dictates otherwise. The building fear over AI fits that pattern.
